
12/5/2023 Ia writer github io

Personally, I’ve always used GitHub Pages and I’m still using it. To name a few: GitHub Pages or Netlify or Firebase Hosting. But how Hugo works is not the topic of this article, so if you are interested to know a bit more, I suggest you look over the documentation.Īs mentioned above, the final output of Hugo is a static website and there are many free solutions to host it. With Hugo, you can write articles or content with Markdown, and then Markdown pages are automatically transformed into HTML and CSS pages when you build the website. It is a powerful tool that lets you have a website up and running in just a few minutes. The site is built with Hugo, one of the most popular static site generator. A bit about tech stack #īefore speaking about the setup, I want to spend some words about the tech stack. I’m pretty confident I’ve ended up with something worth sharing. There’s no perfect solution and Future Me will most likely refactor and (over)re-engineer the current solution), I started to seek the “perfect” writing setup. After I finally landed on the “perfect” tech architecture (I know, I’m lying. After a short while spent on Medium, I decided I wanted to be the sole owner of my content, so I started experimenting with different solutions and ideas. Ingredients.It’s been a few years since I started writing this blog, and I quite like sharing my thoughts and experiences. # that allows us to use the same index in to the list for # this loop goes from 0 to the number of units instead over Summary = clean(content.find(class_="topnote"))įor unit in content.find_all("span", class_="quantity"):įor name in content.find_all("span", class_="ingredient-name"): # find_all get's all elements with this class, whereas find (above)įor note in content.find_all(class_="recipe-note-description"): Yield_time = clean(yield_.li.next_sibling.next_sibling.span.

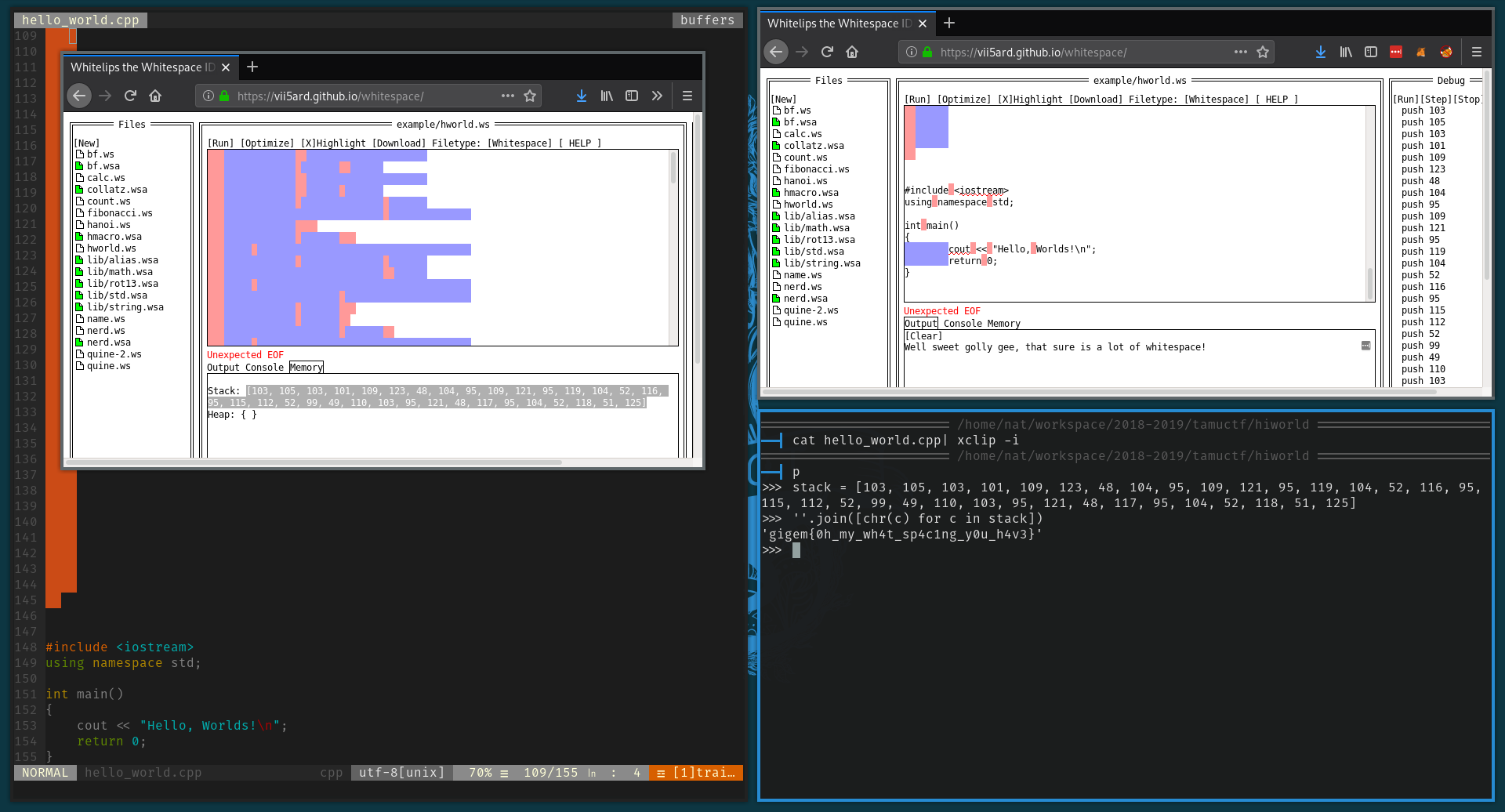

Yield_servings = clean(yield_.li.span.next_sibling.next_sibling) # can manually move up/down/sideways in the parsed document # the class "recipe-time-yield" contains 2 li elements This is how you Yield_ = content.find(class_="recipe-time-yield") Title = clean(content.find(class_="recipe-title"))Īuthor = clean(content.find(class_="byline-name")) # This means find the first element which has the class "recipe-title" # This tells BeautifulSoup to parse the htmlĬontent = BeautifulSoup(f, "html.parser") # So we use the requests package to get the url this extension is # Pythonista cannot (or does not offer) getting the contents of a # This gets the URL this extension is called on Print('Running in Pythonista app, using test data.\n') # appex is the package which contains all the logic for being a share # This is mostly copied from the URL Extension template from Pythonista # enumerate gives us every item in a list and it's index # python figures out this is a String, so it will only allow you to # it can then be used anywhere inside this function (def()) # f-strings allow you to insert variables inside of strings # types of variables are not explicit in python, but they are enforced, Return unicodedata.normalize("NFKD", txt.get_text().strip()) # Found this online, NYTimes includes '\xa0' which is raw ascii ' ' Hopefully it will be a good reference! import clipboard I've included my script with a lot of comments. But, I encourage you to learn it! Perhaps it’s an answer to doing the same action as you’ve done here on your Mac. So, while you can use it to learn python, it would be very difficult to use without knowing python. Pythonista is just an editor for python with automation capabilities. Pythonista includes a package BeautifulSoup which makes it pretty easy to grab elements from html.
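
As a rough illustration of two ideas from the script's comments (NFKD normalization of `'\xa0'`, and pairing quantities with ingredient names by index using `enumerate` and f-strings), here is a minimal standalone sketch. It uses only the standard library and skips the scraping step entirely. The names `clean_text` and `format_ingredients` and the sample strings are hypothetical, chosen for illustration, and are not part of the original script.

```python
import unicodedata


def clean_text(raw: str) -> str:
    """Strip surrounding whitespace, then apply NFKD normalization.

    NFKD replaces compatibility characters such as the non-breaking
    space (U+00A0) with their plain equivalents (an ordinary space).
    """
    return unicodedata.normalize("NFKD", raw.strip())


def format_ingredients(quantities, names):
    """Pair each quantity with the name stored at the same index."""
    lines = []
    # enumerate yields (index, item); the index looks up the matching name
    for i, quantity in enumerate(quantities):
        # the f-string inserts both cleaned values into one line of text
        lines.append(f"- {clean_text(quantity)} {clean_text(names[i])}")
    return lines


# Hypothetical sample data, not taken from any real recipe page
quantities = ["1\xa0cup", " 2 tablespoons "]
names = ["flour\xa0", "olive\xa0oil"]

for line in format_ingredients(quantities, names):
    print(line)
```

Note that NFKD also decomposes accented letters into a base letter plus a combining mark, so it does more than replace non-breaking spaces.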
